Imagine teaching a computer not just once, but continuously like how humans learn throughout their life. That’s the big idea behind Nested Learning, a fresh approach from Google Research that could help AI models learn new things without forgetting what they already know.

The Problem: Why AI Forgets

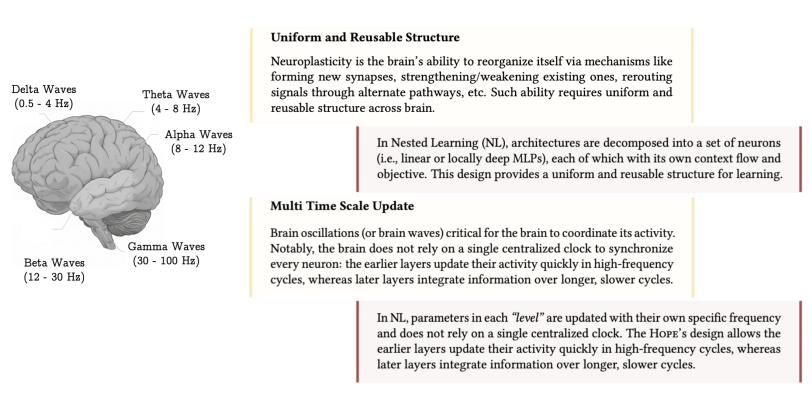

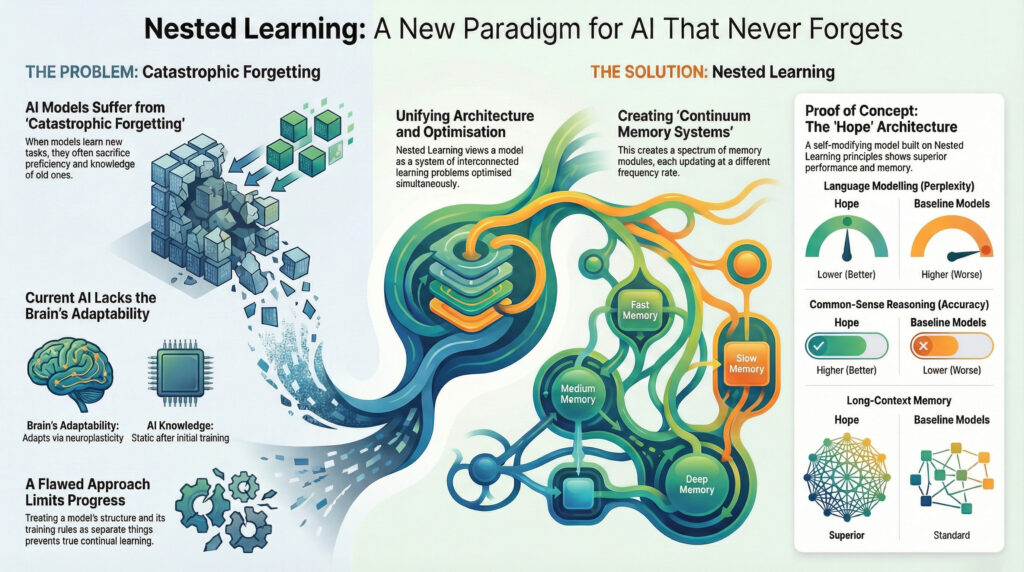

Traditional AI models struggle with continual learning – when you feed them new tasks, they tend to forget earlier ones. This happens because they constantly update their core parameters, overwriting what was learned before. In neuroscience, our brains don’t work that way. Thanks to neuroplasticity, different parts of the brain adapt and update at different rates. That helps us keep old memories even as we learn new things. In contrast, most machine-learning systems treat the architecture (the “shape” of the network) separately from the optimization algorithm (how it learns). Nested Learning challenges that separation.

What Nested Learning Actually Means

Nested Learning reframes a single AI model as a network of smaller, connected learning units, each with its own role.

- Nested Optimization Problems – Rather than one monolithic “learn everything” loop, the model is seen as multiple optimization problems running together or layered on top of each other.

- Context Flow & Update Rates

- Each of these nested problems has its own “context flow”: the information it uses to learn.

- They also update on different time scales – some parts of the model change quickly, others more slowly. This mimics how brains consolidate memories: fast learning + slow, steady updates.

- Unified View of Architecture + Optimization – Instead of thinking of architecture (how big or deep a network is) and optimization (how we train it) as separate, Nested Learning sees them as different levels of the same learning system.

Key Innovations

Google’s team used several clever techniques to put Nested Learning into action:

- Deep Optimizers

- Traditional optimizers (like SGD or Adam) are reinterpreted as memory modules – they don’t just compute gradients, but store and recall information. By changing how these optimizers work (for example, using an L2 loss instead of just simple dot products), they become more robust, especially when dealing with noisy or surprising data.

- Continuum Memory Systems (CMS)

- In standard models, you often have “short-term memory” (recent context) and “long-term memory” (what the model was pretrained on). Nested Learning expands this into a spectrum of memory modules.Each module in this continuum learns at its own pace (its own update frequency), allowing richer retention of information over different time scales.

key components to unlock the continual learning in humans

HOPE Architecture

To prove Nested Learning actually works, Google built a prototype architecture called HOPE (Hierarchy + Experts + Smart Routing):

- It’s a self-modifying model: parts of it can change how they learn over time.

- It uses continuum memory system (CMS) blocks to handle very large contexts (i.e., long sequences of information).

- Hope can optimize not just its weights, but also its own update rules, in a loop kind of like the model “learning how to learn.”

Test results of this architecture are promising :

- It showed better performance on language modeling tasks.

- It handled long-context reasoning much better than existing models.

- In continual learning scenarios, it retained old knowledge more effectively.

The full results available in the research paper.

What This Means for the Future

- Lifelong Learning: Nested Learning is a step in building AI that truly grows over time, instead of being retrained from scratch.

- Flexible Design: Because the paradigm adds “levels” of learning (with different update speeds), future researchers can experiment with architectures that were hard to imagine before.

- Closer to Human Learning: The idea mimics how biological brains work multi-scale memory, different learning rates which could be a foundation for more adaptive and efficient AI systems.

Conclusion

Nested Learning isn’t just a tweak or a new algorithm — it’s a paradigm shift in how we think about training and structuring AI models. Instead of a flat architecture that learns all at once, it treats a model like a layered system, where different parts have their own learning rhythm. That makes it possible for AI to keep learning without discarding its past. Read about LM Studio Image Generation using FastSD MCP Server.